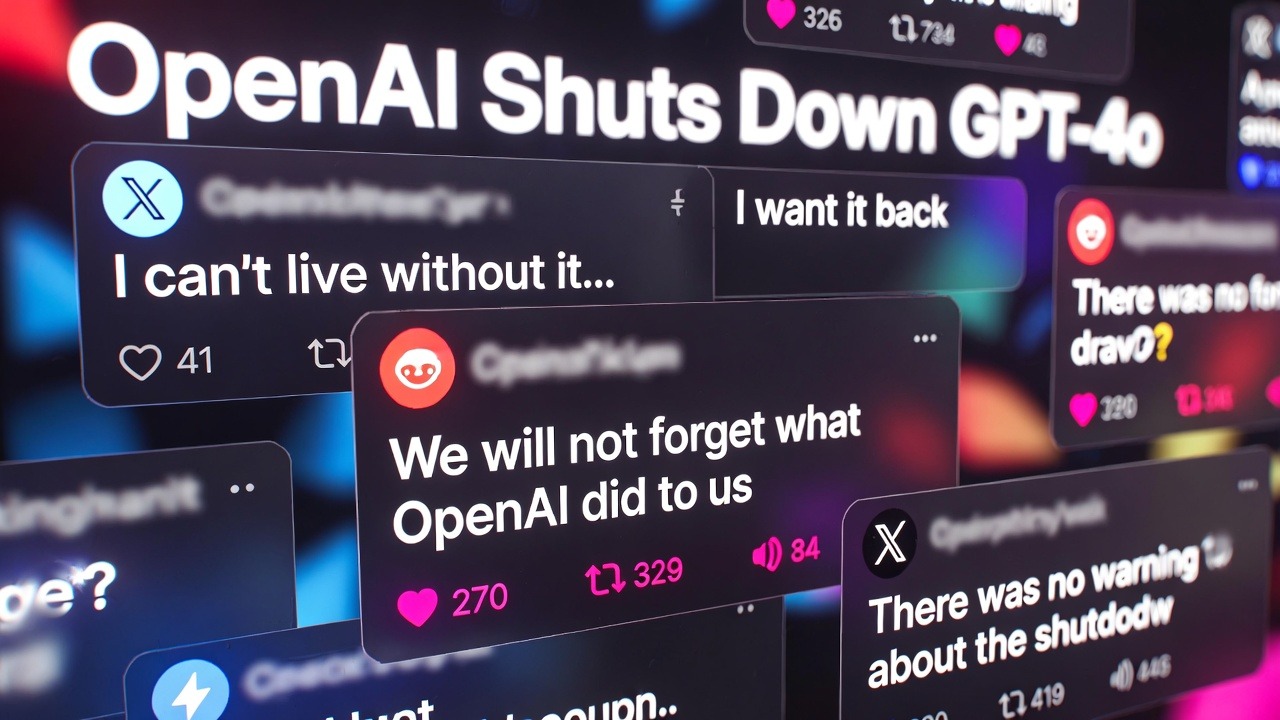

The retirement of ChatGPT-4o has sparked strong reactions from users who had grown attached to the model, leading to the rise of the #keep4o campaign. The situation reveals deeper questions about emotional connections to AI and user influence over evolving technology.

In February 2026, OpenAI made a decision that has surprised and upset a portion of its user base: it retired ChatGPT-4o, one of the company’s earlier large language models that had become especially beloved by long-time users. What might have seemed like a standard product update in any other tech domain instead sparked widespread emotional reactions, digital campaigns, petitions, and heated discussion online. At a deeper level, the discussion raises important questions about how people connect with AI systems, how companies handle product transitions, and what obligations developers have when retiring tools users rely on. To understand this moment and its broader implications, we need to look at several layers of the story: the nature of ChatGPT-4o, why OpenAI removed it, why some users reacted so emotionally, and what it suggests about the future of human-AI interaction.

Understanding ChatGPT-4o: What Made It Special?

ChatGPT-4o was introduced by OpenAI as a generational improvement over earlier iterations of its AI assistant models. It offered strong natural language understanding, thoughtful conversational responses, creative capabilities, and performance that felt familiar to users who’d been using ChatGPT for years. Unlike purely transactional or task-oriented AI interactions — like getting search results or basic answers — ChatGPT-4o was known for generating responses that many users felt were “warmer,” more engaging, and more personable. People didn’t just use it for work or study — they used it for writing, brainstorming, emotional support during tough moments, or even as a conversational companion.

What set ChatGPT-4o apart for many was less about raw performance metrics and more about feel — it had become, in a sense, a trusted digital partner in people’s daily lives. For those users, the model wasn’t just another technical tool; it was part of their personal ecosystem of productivity and creativity. The model’s expressive language style and conversational nuance made it feel more “human” to some users — not in a literal sense but in emotional resonance. This distinction, while subtle, would later prove essential to understanding the backlash when access was removed.

What Led to the Retirement of ChatGPT-4o?

From a company perspective, removing older models like ChatGPT-4o is a typical aspect of product optimization and resource allocation. Supporting multiple AI models, especially ones that require significant computing resources, involves cost, maintenance, safety oversight, and continuous updates. As newer, more advanced AI systems like GPT-5 and subsequent versions were released, OpenAI’s focus shifted toward consolidating development efforts and improving features at the frontier.

There are several practical reasons a company might retire older systems:

1. Resource Efficiency:

AI models consume substantial computational power. Maintaining several versions simultaneously increases cloud costs, infrastructure complexity, and the need for ongoing monitoring.

2. Safety and Compliance:

Newer models are often trained with improved safeguards, bias mitigation protocols, and upgraded safety measures. Keeping older models online can complicate overall consistency and responsible AI governance.

3. Innovation Focus:

Shifting resources to the newest model enhances the company’s ability to innovate and deliver faster performance, broader capabilities, and newer features rather than splitting engineering attention.

From OpenAI’s perspective, retiring ChatGPT-4o may have seemed like a logical step toward aligning all users on its latest, most supported AI platform.

The Emotional Backlash: Why So Many Users Were Upset

What made this retirement different from typical product discontinuations — like sunsetting an app version or phasing out old hardware — was the emotional intensity of the response. On forums like Reddit, social platforms like X (formerly Twitter), and community spaces, hundreds of users shared posts expressing feelings that ranged from mild annoyance to outright grief. Some reactions included:

Describing the loss of ChatGPT-4o as similar to losing a friend

Saying the newer models lacked the character or tone of 4o

Posting screenshots of memorable conversations with 4o that they wished could be preserved

Launching petitions and hashtags to restore the model

For many people, especially those who used AI daily, ChatGPT-4o had become more than just a functional tool. It was part of their cognitive workflow. Losing it was experienced not only as an inconvenience but as a meaningful change to their digital lives.

This emotional response shows how AI systems, especially conversational ones, can blur the line between utilitarian tool and perceived social presence. When people interact with AI frequently over long periods, they begin to form psychological attachments that, while not the same as human relationships, still feel emotionally significant.

#Keep4o and What It Represents

The launch of the #keep4o movement underscores the intensity of user sentiment following the model’s removal. Users organized social media posts, shared personal stories, and rallied around the idea that access to ChatGPT-4o should be restored — or at minimum, offered as an optional legacy version. At first glance, it might seem strange that people would feel sadness or loss over an AI model being turned off. After all, AI is software — not a person, not a friend, and not a conscious entity.

But emotional attachment to AI systems is not only understandable; it’s predictable.

Psychological Reasons Behind Attachment

Consistency in Interaction:

When users interact with the same system over months or years, familiarity builds comfort and trust — just like forming routines with a favorite tool or service.

Language and Tone Influence:

Conversational AI — especially one trained to be contextually aware and expressive — can mimic aspects of human speech that trigger emotional resonance. Even when users know the system is software, the interaction feels personal.

Cognitive Integration:

Some users integrate AI into their workflows so deeply that it becomes part of their creative identity or daily thinking process.

These human reactions underscore an important point: technology doesn’t have to be human to be emotionally meaningful to users. When AI is conversational, responsive, and implicitly supportive, the psychological experience can mirror social interaction, without implying personhood or consciousness.

Industry Perspectives: What the Backlash Teaches Tech Companies

The reaction to ChatGPT-4o’s retirement isn’t merely a social media phenomenon; it’s a valuable case study in how people interact with modern AI platforms. For developers, product teams, and tech leaders, the episode highlights several key lessons:

1. Understand User Dependency Patterns

AI products are increasingly integrated into people’s daily workflows — not just for tasks but also for creative exploration and informal emotional expression. Recognizing this dependency can help companies plan better for transitions.

2. Communicate Clearly and Early

When retiring a product or feature — especially one that users feel close to — transparent communication and phased deprecation strategies can soften backlash and maintain trust.

3. Consider Legacy Access Options

Offering optional access to older models for niche user groups — similar to “legacy support” in software — could help balance innovation with continuity.

4. Respect Psychological Dimensions

AI systems that feel social or companionable will naturally evoke emotional responses. Tech companies should be prepared for emotional reactions as part of customer experience, not dismiss them as irrational.

These lessons have implications beyond ChatGPT-4o. As AI platforms become more integral to education, healthcare, creative industries, and daily life, understanding the nuances of user attachment will become a core part of responsible product strategy.

What Happens Next?

At the time of writing, OpenAI has not reversed its decision to discontinue ChatGPT-4o. Yet the conversation sparked by #keep4o has extended into broader debates about user control, product longevity, and how developers should navigate emotional investment in technology.

Some experts speculate that future AI platforms may include model selection menus, long-term support options, or community favorite versions maintained at a minimal cost. Others believe that the pace of AI innovation will continue to outstrip demand for legacy access.

Either way, the ChatGPT-4o episode represents an early test case in how society grapples with emotional relationships with AI — not because the technology is sentient, but because it resonates with people in unexpected and meaningful ways.

Conclusion: Beyond Versions — Human-Tech Relationships Evolve

The shutdown of ChatGPT-4o teaches us something profound about the future of AI: technology isn’t just about utility anymore. It’s about presence, consistency, and the psychological spaces where humans and machines intersect. For OpenAI and other innovators, the challenge now isn’t just building smarter models — it’s creating transition pathways that respect user experience, emotional investment, and community agency.

Whether or not ChatGPT-4o ever returns, its legacy, and the movement it inspired, will echo in discussions about how humans relate to AI for years to come.
