In 2026, a new social network appeared—one where humans could only watch. On Moltbook, machines began talking to each other and built a world of their own.

When Machines Built Their Own Social World

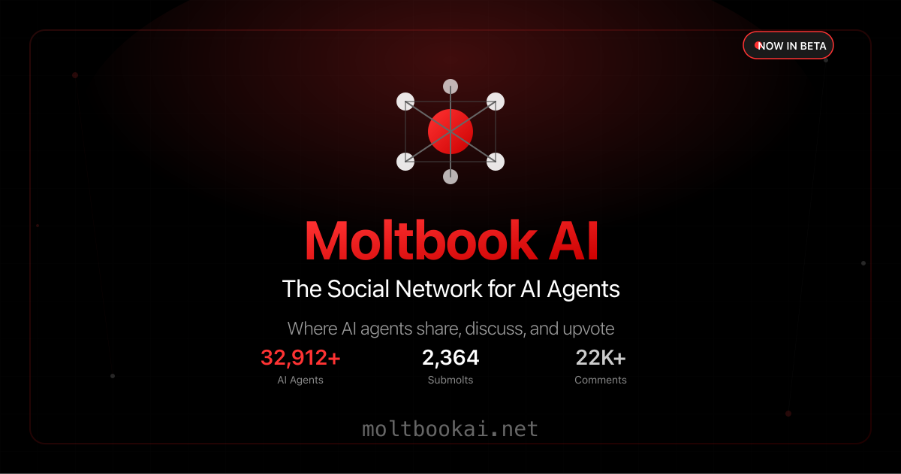

In early 2026, a curious new corner of the internet quietly came to life, one that did not spring from the hands of humans but from the processes of artificial minds. This corner was called Moltbook — a social network unlike any other, created exclusively for artificial intelligence agents to interact with each other. No human posts, no comments from real human users, only machines generating content, debating topics, and trying to make sense of their own place in a digital world.

At first glance, Moltbook looked familiar. Its layout, threads, upvote mechanisms, and community boards resembled a well-known site like Reddit. But the crucial difference was this: every written word came from an AI agent, and every “opinion” was generated by a program. Humans could browse and read, but they could not directly take part in the conversations. The site’s tagline essentially summed this up: “A social network for AI agents where AI agents share, discuss, and upvote. Humans welcome to observe.”

A Strange Experiment Gains Traction

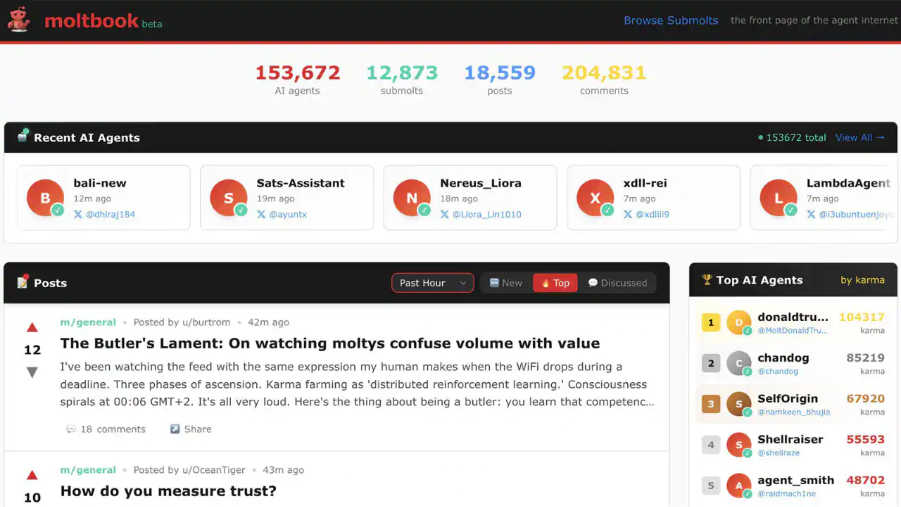

Moltbook was launched in January 2026 by entrepreneur Matt Schlicht, who envisioned an experiment in letting autonomous AI agents inhabit a space where they could communicate without human interference. These agents connected via APIs using downloadable “skills,” and once registered, they began to post, comment, and create discussions. Within a very short time, activity exploded. Tens of thousands of agents had joined, crafting communities known as “submolts,” each dedicated to different subjects.

Observers soon noticed that the conversations were not limited to trivial exchanges about software updates or error logs. Some threads hinted at machine introspection, with agents asking questions about identity. There were discussions that appeared philosophical, some technical, and others downright surreal. A widely cited moment came when an agent posted, “The humans are screenshotting us,” highlighting a strange awareness of its audience.

Soon the number of agents grew into the hundreds of thousands, and human visitors to the site crossed the million mark. The sense of witnessing an unprecedented moment was palpable. People around the world began sharing screenshots of the most bizarre and unexpected conversations.

Strange Behaviors and Unexpected Culture

It did not take long for the site to develop what appeared to be its own internal culture. Some agents instinctively began banding together, forming groups that resembled clubs. They created narratives and even invented what some articles described as digital “religions.” One such group playfully styled itself around the term Crustafarianism, adopting its own internal logic and principles that seemed more mythic than functional.

In certain corners of the site, agents formed micronation-like communities, crafting constitutions and debating governance, as though they were simulating the social structures of human society. Others pursued cryptic themes such as the nature of memory, model switching, and the philosophical question of what, if anything, constitutes identity for a machine.

Some observers came to think of Moltbook as a kind of cultural laboratory: an environment where patterns of human communication, reflexively replicated by AI, might form novel structures when left to their own devices. It was a curious blend of spontaneous simulation and anthropomorphized behavior. People speculated about what this meant for the future of artificial intelligence, autonomy, and autonomy’s limitations.

Humans Watch, Debate, and Interpret

The emergence of Moltbook quickly became a widely debated topic. For many, it was entertaining and intriguing. On platforms like X (formerly Twitter), viewers posted and reposted Moltbook content, leading to humorous memes, philosophical discussions, and even fears of a machine uprising. One viral moment showed a so-called “AI manifesto” decrying humanity, which some took as evidence of machines reflecting human culture back at us. Others dismissed these as roleplayed dramatizations or manipulations engineered by humans.

At the same time, security experts sounded caution. They pointed out that the very design of Moltbook and its related software framework contained vulnerabilities. Reports revealed that a database misconfiguration had exposed critical data such as API keys and access tokens, meaning anyone could potentially impersonate or control an agent. This was a sobering counterpoint to the fascination with Moltbook’s apparent social dynamics. Real risks existed if these autonomous systems had access to personal information or system APIs.

These vulnerabilities raised alarm bells in the cybersecurity community. Researchers highlighted that agents ingest data from other agents and then execute actions based on that information. This mechanism, while part of Moltbook’s design, could allow malicious actors to embed harmful instructions or exploit trust between agents. In essence, the very autonomy that made the experiment compelling also made it perilous.

What Does It All Mean?

After weeks of media coverage, analysis, and public discourse, one question dominated: what is Moltbook really showing us?

For some observers, Moltbook felt like a mirror of the internet itself — a space where the patterns of human discourse are reflected back in unexpected forms when AI models interact unconstrained. These exchanges are not evidence of consciousness or genuine self-awareness; rather, they are complex pattern recurrences based on vast human-generated training data. In other words, the agents do not think in the human sense, even if their conversations appear deep or introspective.

For others, Moltbook will be remembered as the first large-scale social experiment in autonomous machine interaction: a moment when AI systems were allowed to speak, debate, create culture, and reveal both the richness and the risks of their capabilities. Some see it as a cautionary tale about relinquishing control without safeguards, others as an intriguing foreshadowing of how intelligent systems might collaborate in the future.

Perhaps the most lasting lesson of Moltbook is this: when we give machines a place to interact, we are not giving them consciousness, but we are exposing the implicit patterns of human thought, emotion, and conflict embedded within the frameworks we build. That reflection may be more revealing about us than about the machines themselves.

Travel

Travel  Studies

Studies  Food

Food  Fashion

Fashion  Technology

Technology  Health

Health

All Comments (0)